In an age where data drives decision-making, businesses, researchers, marketers, and developers are constantly seeking smarter ways to gather information from the web. From tracking competitor pricing to building massive lead databases, automated data extraction tools have become indispensable. Among these tools, platforms like Octoparse have gained popularity for making web scraping accessible—even to users without coding knowledge. But Octoparse is just one player in a rapidly evolving ecosystem of powerful web scraping solutions.

TLDR: Web scraping tools like Octoparse help users extract structured data from websites without manual copying. They range from no-code visual platforms to advanced, developer-focused frameworks. Each tool offers different strengths, such as automation, scalability, proxy handling, or API integrations. Choosing the best option depends on your technical expertise, data needs, and legal considerations.

What Is Web Scraping?

Web scraping is the process of automatically extracting data from websites and converting it into structured formats such as CSV files, Excel spreadsheets, or database tables. Instead of manually copying and pasting information, scraping tools either request pages directly and parse their HTML, or simulate user behavior by navigating pages, clicking buttons, and scrolling content, and then capture specific data fields.
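To make the idea concrete, here is a minimal sketch using only Python's standard library. It assumes a hypothetical product page where each item is an `<li class="product">` element containing `name` and `price` spans. Real sites use their own markup, and dedicated parsing libraries or visual tools cope with messy HTML far better than this.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical sample markup; real pages use their own tags and class names.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Desk Lamp</span><span class="price">$24.99</span></li>
  <li class="product"><span class="name">Office Chair</span><span class="price">$129.00</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text of name/price spans inside each product <li>."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._field = None  # field whose text we are currently reading
        self._current = {}

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "li" and "product" in classes:
            self._current = {}
        elif tag == "span" and ("name" in classes or "price" in classes):
            self._field = "name" if "name" in classes else "price"

    def handle_data(self, data):
        if self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "li" and self._current:
            self.rows.append(self._current)
            self._current = {}


def to_csv(rows):
    """Serialize the extracted rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


parser = ProductParser()
parser.feed(SAMPLE_HTML)
parser.close()
print(to_csv(parser.rows))
```

Visual tools like Octoparse perform the same two steps (locate elements, then export fields) behind a point-and-click interface.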

These tools can collect a wide range of information, including:

- Product prices and descriptions

- Customer reviews and ratings

- Contact information

- Job listings

- Real estate data

- Market trends and financial statistics

For businesses, the ability to access this data quickly can provide a competitive edge and valuable market intelligence.

Why Tools Like Octoparse Are So Popular

Octoparse stands out because it offers a no-code, visual interface that allows users to build scraping workflows by pointing and clicking. You don’t need to write Python scripts or understand HTML parsing. Instead, you interact with a website directly and define which elements to capture.

Key features typically found in tools like Octoparse include:

- Visual workflow builders for setting up extraction steps

- Cloud-based scraping for running tasks 24/7

- IP rotation and proxy support to reduce the risk of being blocked

- Scheduled scraping for recurring data collection

- Export options such as CSV, Excel, JSON, or API delivery

This blend of automation and usability makes such platforms attractive to marketers, e-commerce sellers, researchers, and small businesses without dedicated development teams.

Top Alternatives to Octoparse

While Octoparse is powerful, it’s not the only option. Depending on your use case, there may be better tools suited to your needs.

1. ParseHub

ParseHub is another popular no-code solution. It allows users to scrape dynamic websites that rely heavily on JavaScript. Like Octoparse, it uses a visual interface but offers particularly strong support for:

- Interactive elements like dropdowns and infinite scroll

- REST API integrations

- Cloud-based automation

Its flexibility makes it suitable for moderately complex projects.

2. Apify

Apify balances ease of use with developer flexibility. It provides pre-built scraping “actors” and templates, along with the ability to write custom scripts. Businesses handling larger-scale operations often prefer Apify due to its:

- Scalable infrastructure

- Marketplace of ready-made scrapers

- Strong API-first architecture

3. Scrapy (For Developers)

Scrapy is an open-source Python framework designed for developers. Unlike visual tools, Scrapy requires coding knowledge but offers:

- High performance through asynchronous, concurrent request handling

- Full customization

- Extensive community support

This makes it ideal for large enterprise projects or complex scraping tasks requiring granular control.

4. WebHarvy

WebHarvy focuses on simplicity. It automatically detects patterns in web elements, reducing manual configuration. Small businesses looking for quick deployment often find it useful.

Key Features to Look For in a Web Scraping Tool

Not all scraping tools are created equal. When choosing a platform like Octoparse or its alternatives, evaluate the following factors:

Ease of Use

If you don’t have programming experience, a drag-and-drop interface is crucial. Tools that visually detect data fields can drastically shorten your learning curve.

Handling Dynamic Content

Modern websites rely heavily on JavaScript, AJAX, and dynamic loading. Make sure your tool can handle:

- Infinite scrolling pages

- Login-based content

- Interactive filters

- CAPTCHA challenges
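Infinite scroll is often backed by background requests that each fetch one "page" of results. When that is the case, a scraper can sometimes request those pages directly until one comes back empty. The sketch below assumes such an endpoint exists; `fetch_page` is a hypothetical stand-in for it. Content that only renders inside a browser would instead need a headless browser or a tool that drives one.

```python
from typing import Callable, Dict, List


def collect_all_items(fetch_page: Callable[[int], List[Dict]], max_pages: int = 100) -> List[Dict]:
    """Request pages 1, 2, 3, ... until one returns no items.

    fetch_page is hypothetical: it stands in for whatever request the
    page's infinite scroll issues in the background. max_pages guards
    against endpoints that never return an empty page.
    """
    items = []
    for page in range(1, max_pages + 1):
        batch = fetch_page(page)
        if not batch:  # an empty page signals the end of the results
            break
        items.extend(batch)
    return items


# Stub endpoint for illustration: three pages of two items, then nothing.
def fake_fetch(page):
    if page > 3:
        return []
    return [{"id": (page - 1) * 2 + i} for i in range(2)]


print(len(collect_all_items(fake_fetch)))
```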

Proxy and Anti-Blocking Support

High-volume scraping often triggers website protection systems. Advanced scraping tools incorporate:

- Automatic IP rotation

- Residential proxies

- Request throttling
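Rotation and throttling can be combined in a small scheduler that hands out proxies in round-robin order and waits so consecutive requests are at least a minimum interval apart. This is a simplified sketch: the proxy addresses are hypothetical, and a production setup would likely need more, such as retiring proxies that repeatedly fail.

```python
import itertools
import time


class PoliteScheduler:
    """Hands out proxies round-robin and spaces requests at least min_interval apart."""

    def __init__(self, proxies, min_interval_seconds):
        self._proxies = itertools.cycle(proxies)  # endless round-robin iterator
        self._min_interval = min_interval_seconds
        self._last_request = None  # monotonic timestamp of the previous request

    def next_proxy(self):
        # Sleep only as long as needed to respect the minimum interval.
        if self._last_request is not None:
            elapsed = time.monotonic() - self._last_request
            if elapsed < self._min_interval:
                time.sleep(self._min_interval - elapsed)
        self._last_request = time.monotonic()
        return next(self._proxies)


# Hypothetical proxy addresses; pass the returned value to your HTTP client.
scheduler = PoliteScheduler(
    ["http://proxy-a.example:8080", "http://proxy-b.example:8080"],
    min_interval_seconds=0.2,
)
for page in range(1, 4):
    proxy = scheduler.next_proxy()
    print(f"request page {page} via {proxy}")
```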

Scalability

If you plan to collect thousands—or millions—of data points, cloud infrastructure and distributed scraping capabilities are essential.
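On a single machine, a first step toward scale is fetching pages concurrently rather than one at a time. The sketch below uses Python's `concurrent.futures.ThreadPoolExecutor`; `fetch` is a hypothetical callable that downloads and parses one page. Distributed scraping applies the same idea across many machines, which is the part cloud platforms manage for you.

```python
from concurrent.futures import ThreadPoolExecutor


def scrape_concurrently(urls, fetch, max_workers=4):
    """Call fetch(url) for each URL on a thread pool; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch, urls))


# Hypothetical stand-in for a real download-and-parse function.
def fake_fetch(url):
    return {"url": url, "page": int(url.rsplit("/", 1)[-1])}


urls = [f"https://example.com/listings/page/{n}" for n in range(1, 6)]
results = scrape_concurrently(urls, fake_fetch)
print([r["page"] for r in results])
```

Keeping the worker count modest and pairing it with throttling helps prevent concurrency from turning into the kind of traffic that triggers blocking.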

Data Export and Integration

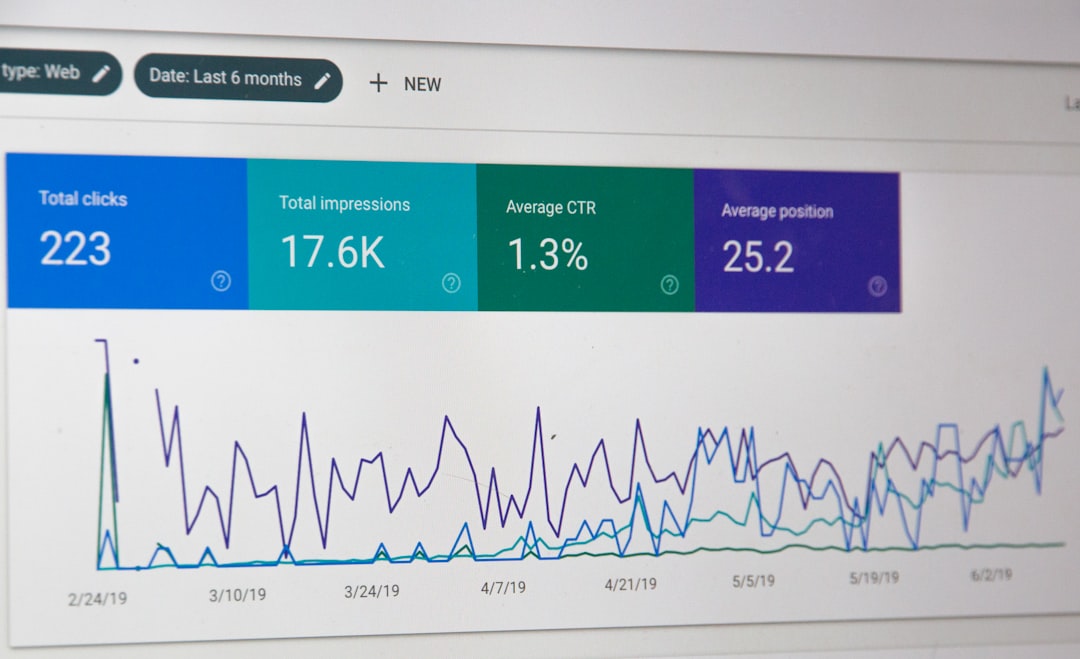

Make sure your tool can export data in formats compatible with your analytics workflow. API access is especially valuable for integrating scraped data into CRM systems, dashboards, or machine learning pipelines.
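For pipelines and dashboards, JSON Lines (one JSON object per line) is a convenient interchange format because records can be appended and streamed individually. A minimal standard-library sketch of writing and reading it back; the record fields are illustrative:

```python
import json


def to_json_lines(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "".join(json.dumps(record, ensure_ascii=False) + "\n" for record in records)


def from_json_lines(text):
    """Parse JSON Lines text back into a list of dicts, skipping blank lines."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


records = [
    {"name": "Desk Lamp", "price": 24.99},
    {"name": "Office Chair", "price": 129.0},
]
exported = to_json_lines(records)
print(exported)
```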

Common Use Cases for Web Scraping Tools

Web scraping tools aren’t just for tech companies. Their applications span industries and business sizes.

E-commerce and Retail

- Monitoring competitor pricing

- Tracking product availability

- Analyzing customer reviews
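Price and availability monitoring usually comes down to comparing two scraped snapshots. The sketch assumes each snapshot is a dictionary mapping a product identifier (hypothetical SKUs here) to its price; products that appear or disappear between runs hint at availability changes.

```python
def compare_snapshots(previous, current):
    """Compare two {product_id: price} snapshots.

    Returns (changed, added, removed): changed maps product_id to
    (old_price, new_price); added and removed are sorted id lists.
    """
    changed = {
        pid: (previous[pid], price)
        for pid, price in current.items()
        if pid in previous and previous[pid] != price
    }
    added = sorted(set(current) - set(previous))
    removed = sorted(set(previous) - set(current))
    return changed, added, removed


yesterday = {"sku-1": 24.99, "sku-2": 129.0, "sku-3": 9.5}
today = {"sku-1": 22.99, "sku-2": 129.0, "sku-4": 15.0}
print(compare_snapshots(yesterday, today))
```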

Lead Generation

- Collecting business directories

- Extracting professional profiles

- Building email outreach lists

Real Estate

- Aggregating property listings

- Comparing rental prices

- Identifying market trends

Financial and Market Research

- Tracking stock-related news

- Collecting economic indicators

- Monitoring industry-specific insights

Legal and Ethical Considerations

Although web scraping is powerful, it must be used responsibly. There are important legal and ethical boundaries to consider.

- Respect a website’s Terms of Service

- Avoid accessing password-protected areas without authorization

- Do not scrape sensitive personal data

- Follow data protection regulations such as GDPR where applicable

In some cases, websites provide official APIs for accessing their data. Whenever one is available, an API is usually the safest and most compliant method.
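Alongside the Terms of Service, many sites publish a `robots.txt` file stating which paths they ask automated clients to avoid. It does not replace reading the terms, but checking it is cheap. Python's standard `urllib.robotparser` module can evaluate it; the file contents and the `ExampleBot` user agent below are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content. For a live site you would instead call
# parser.set_url("https://<site>/robots.txt") followed by parser.read().
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in ["https://example.com/products", "https://example.com/private/accounts"]:
    verdict = "allowed" if parser.can_fetch("ExampleBot", url) else "disallowed"
    print(url, verdict)

# Seconds the site asks crawlers to wait between requests, or None if unspecified.
print("crawl delay:", parser.crawl_delay("ExampleBot"))
```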

Advantages of No-Code Scraping Tools

Platforms like Octoparse have democratized access to automation. Their key advantages include:

- Accessibility: Non-technical users can automate data collection.

- Speed: Simple workflows can often be built in minutes rather than days.

- Automation: Scheduled runs eliminate repetitive tasks.

- Cost efficiency: Reduces the need to hire dedicated developers.

For startups and solo entrepreneurs, these benefits can translate into substantial time and monetary savings.

Limitations to Be Aware Of

Despite their strengths, visual scraping tools have constraints:

- Limited flexibility for highly complex scenarios

- Dependency on the platform’s infrastructure

- Subscription costs for large-scale usage

- Occasional workflow breaks if websites change layouts

This is why larger organizations sometimes transition to custom-built solutions once their data operations grow more sophisticated.
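Whichever approach you use, layout changes tend to surface as records with missing or empty fields rather than as outright errors, so a simple validation step after each run can catch a broken workflow early. The required fields and the 50% threshold below are illustrative assumptions, not standard values.

```python
REQUIRED_FIELDS = ("name", "price")  # hypothetical schema for this example


def find_broken_records(records, required=REQUIRED_FIELDS):
    """Return indexes of records that lack a required field or hold an empty value."""
    return [
        i for i, record in enumerate(records)
        if any(not record.get(field) for field in required)
    ]


def layout_probably_changed(records, threshold=0.5):
    """Flag a run as suspicious if it returned nothing or too many broken records."""
    if not records:
        return True
    return len(find_broken_records(records)) / len(records) >= threshold


run = [{"name": "Desk Lamp", "price": "$24.99"}, {"name": "Office Chair", "price": ""}, {"name": "Shelf"}]
print(find_broken_records(run), layout_probably_changed(run))
```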

The Future of Web Scraping Technology

As artificial intelligence evolves, web scraping tools are becoming smarter. Emerging features include:

- AI-powered data recognition that automatically identifies relevant fields

- Natural language task configuration allowing users to describe what data they want

- Improved anti-detection algorithms for smoother operation

- Enhanced data cleaning and transformation before export
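Even without AI assistance, a typical cleaning step is normalizing scraped strings into proper types before export. This sketch converts price text into `Decimal` values and assumes US-style formatting (comma as thousands separator, dot as decimal point); other locales would need different rules.

```python
import re
from decimal import Decimal
from typing import Optional

# First run of digits, optionally with comma separators and a decimal part.
_PRICE_PATTERN = re.compile(r"\d[\d,]*(?:\.\d+)?")


def clean_price(raw: str) -> Optional[Decimal]:
    """Turn text like ' $1,299.00 ' into Decimal('1299.00'); return None if no number."""
    match = _PRICE_PATTERN.search(raw)
    if match is None:
        return None
    return Decimal(match.group().replace(",", ""))


for raw in [" $1,299.00 ", "USD 24.99", "Call for price"]:
    print(repr(raw), "->", clean_price(raw))
```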

The trend points toward tools where users simply state a goal, such as “collect all competitor prices from this category”, and the software handles the rest.

Choosing the Right Tool for You

Selecting between Octoparse and its alternatives comes down to a few core questions:

- Do you have coding experience?

- How large is your scraping operation?

- Do you need cloud automation?

- What is your monthly budget?

- How frequently will you run scraping tasks?

If you need a balance between usability and capability, a no-code tool like Octoparse is often an excellent starting point. For complex enterprise-level projects, developer-centric frameworks may provide the customization required.

Final Thoughts

Web scraping tools like Octoparse have transformed how businesses capture and leverage online data. What once required advanced programming skills can now be accomplished through intuitive visual interfaces. Whether you’re tracking competitors, generating leads, conducting research, or analyzing markets, these platforms offer a gateway to actionable insights.

As with any powerful technology, the key lies in using it responsibly and strategically. With the right tool and thoughtful implementation, web scraping can turn the vastness of the internet into a structured, valuable resource tailored to your goals.